Snowflake Training in Hyderabad

Master Snowflake with our industry-focused 30-day training program in Hyderabad, designed by working professionals. Learn cloud data warehousing, data integration, ELT pipelines, performance optimization, cost management, and real-time project implementation through hands-on practical sessions. This Snowflake course helps beginners and IT professionals build job-ready skills for high-demand data engineering and analytics careers.

For more Info: +91 8309431893

Sign up for a Free Demo

This page covers the details of Snowflake Training in Hyderabad. Scroll down to learn more.

HIGHLIGHTS

Extensive Curriculum

A comprehensive course covering everything from foundational knowledge to advanced topics.

We’ll ensure your resume effectively showcases your expertise to potential employers.

We share your resume with potential employers and provide career guidance, mock interviews, and more.


Want to attend Demo Classes for Free? You can

What is Snowflake?

Snowflake is your cloud-based data platform for storage, processing, and analytics. Think of it as a powerful, scalable data warehouse in the cloud that lets you store massive volumes of structured and semi-structured data and run high-performance queries without managing infrastructure.

Why take Snowflake Training in Hyderabad?

For students in India seeking a thriving career in the data domain, learning Snowflake could be a game-changer. Here’s why:

High Demand & Growth: India’s digital transformation boom has sharply increased the demand for data integration skills. Snowflake is a leading cloud data platform in this space, which makes Snowflake skills valuable to employers.

Lucrative Job Prospects: Certified Snowflake professionals command premium salaries in India, often exceeding six figures annually. The increasing adoption of cloud solutions further fuels this demand.

Versatility & Flexibility: Snowflake goes beyond basic data movement. You’ll build complex data pipelines, transform information, and work with diverse data sources – a versatile skillset in high demand.

CURRICULUM

- Free Account

- Student Account

- Pay As You Go

- Creation of Accounts

- Data Warehouse Basics – OLTP vs OLAP, DW Characteristics, Data Lake vs DW

Dimensional Modeling – Star Schema, Snowflake Schema, Fact & Dimension Tables

ETL vs ELT – Modern ELT approach & Why ELT in Snowflake

Introduction to Snowflake – Overview & Cloud Support (AWS, Azure, GCP)

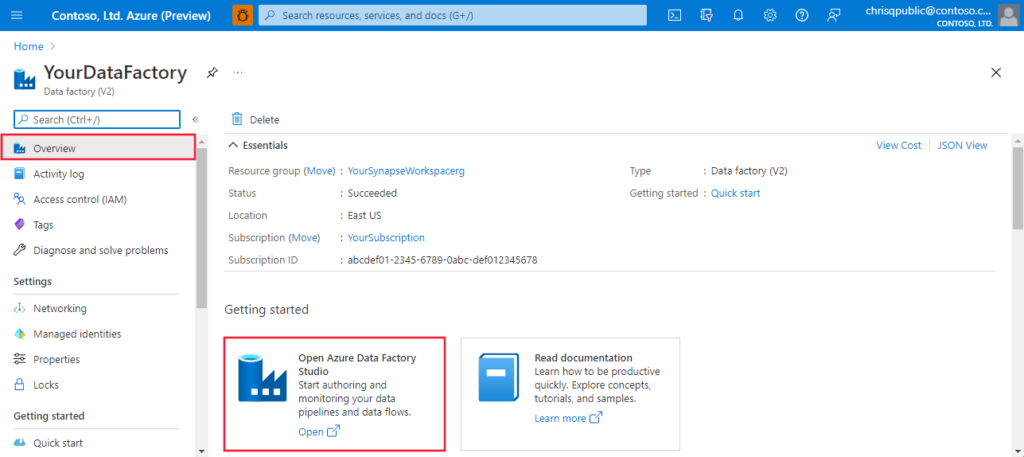

Snowflake Editions & Account Setup – Standard, Enterprise, Business Critical, Snowsight

- Azure Resource Manager vs. classic deployment

- Using Azure to Manage or Delete Resource Groups and Resources

- Azure Subscription Maintenance

- Planning and Managing Cost

- Azure Support Option

- Azure Service Level Agreement

- Azure Cost Analysis and Restriction

- Azure Cost billing Report

- SQL Basics – DDL, DML, Filtering & Sorting

Joins & Aggregations – GROUP BY, HAVING, Joins

Set Operators & Subqueries

Window Functions – ROW_NUMBER, RANK, DENSE_RANK

MERGE & QUALIFY – Upsert scenarios

Stored Procedures & UDFs – Basics

SQL Case Studies & Query Tuning

- Database Objects – Permanent, Transient, Temporary Tables

Views – Regular, Secure, Materialized

External Tables & Stages

File Formats – CSV, JSON, Parquet

Data Loading – COPY command & best practices

Semi-Structured Data – VARIANT & JSON Flattening

Continuous Loading – Snowpipe

- Streams – CDC & Change Tracking

Tasks – Scheduling & Automation

SCD Implementation – Type 1 & Type 2

Performance Optimization – Caching, Micro-partitions, Clustering

Time Travel & Fail-safe

Zero Copy Cloning

Security & Governance – RBAC, Roles, Secure Sharing

- ETL / ELT Pipelines – Incremental & Historical loads

Error Handling & Monitoring

Real-time Pipelines – Streams, Tasks & Snowpipe

- Python Basics – Pandas & Jupyter

Python + Snowflake – Connector & Query Execution

Snowpark – DataFrames, UDFs, Stored Procedures

AWS Integration – S3 Connectivity & External Stages

Snowpipe with S3 – Event Notifications

Migration to Snowflake – RDBMS & Hadoop

Migration Frameworks & Best Practices

DBT Fundamentals – Project Structure, Sources, Seeds

DBT Models & Tests – Incremental models

DBT Snapshots, Macros & Hooks

- Introduction to the SSIS tool

- Installing different versions of Visual Studio SSDT for SSIS ETL

- Creating a new project and modifying an existing SSIS ETL project

- Creating different types of packages

- Control Flow tasks and their different types of components and transformations

- Different sources and destinations for data loading

- Full load development using an SSIS package

- Incremental processing using the Lookup Transformation, SCD, and CDC components

- Automating or scheduling existing SSIS ETL packages for daily loads

- SSIS package deployment model using ISPAC and manifest files

- Project Deployment model, Package Deployment model, and File System model for SSIS ETL packages

- Creating jobs to schedule existing SSIS ETL packages and configurations
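The window-function topic listed in the SQL module (ROW_NUMBER, RANK, DENSE_RANK) is easiest to understand by seeing how the three functions treat ties. The following is a minimal sketch that runs the query locally with Python's built-in sqlite3 module rather than against Snowflake; the `scores` table and its rows are hypothetical sample data. The three functions are standard SQL, though details such as QUALIFY are Snowflake-specific and are not shown here.

```python
import sqlite3

# In-memory database with a small, hypothetical table of marks.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE scores (student TEXT, marks INTEGER)")
conn.executemany(
    "INSERT INTO scores VALUES (?, ?)",
    [("Anil", 90), ("Bhanu", 90), ("Charan", 85), ("Divya", 80)],
)

query = """
SELECT student,
       marks,
       ROW_NUMBER() OVER (ORDER BY marks DESC, student) AS row_num,
       RANK()       OVER (ORDER BY marks DESC)          AS rnk,
       DENSE_RANK() OVER (ORDER BY marks DESC)          AS dense_rnk
FROM scores
ORDER BY marks DESC, student
"""
results = conn.execute(query).fetchall()

# ROW_NUMBER is always unique; RANK leaves a gap after ties; DENSE_RANK does not.
for row in results:
    print(row)
```

The two students tied on 90 share rank 1 under both RANK and DENSE_RANK, but the next student gets rank 3 under RANK and rank 2 under DENSE_RANK.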

What are the advantages of Snowflake? (Snowflake Training in Hyderabad)

1) Separate Compute & Storage

Snowflake’s architecture separates compute from storage. You can scale each independently, which means:

Faster queries during peak loads

Pay only for what you use

No performance conflicts between teams

2) High Performance with Virtual Warehouses

Its virtual warehouses let multiple users run queries simultaneously without slowing each other down—ideal for analytics teams and BI tools.

3) Supports Structured & Semi-Structured Data

Query JSON, Avro, Parquet, XML alongside tables using standard SQL. No complex transformations required.
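To make the idea of querying semi-structured data concrete, here is a minimal sketch in plain Python of what flattening a nested JSON document into table-like rows looks like. It only illustrates the concept; in Snowflake the equivalent work is done in SQL on a VARIANT column using the FLATTEN function. The record contents and the `flatten_items` helper are hypothetical.

```python
import json

# Hypothetical order record containing a nested object and a nested array.
raw = (
    '{"order_id": 101, '
    '"customer": {"name": "Asha", "city": "Hyderabad"}, '
    '"items": [{"sku": "A1", "qty": 2}, {"sku": "B7", "qty": 1}]}'
)

def flatten_items(record):
    """Return one flat row per element of the nested "items" array."""
    rows = []
    for item in record.get("items", []):
        rows.append({
            "order_id": record["order_id"],
            "customer_name": record["customer"]["name"],
            "sku": item["sku"],
            "qty": item["qty"],
        })
    return rows

rows = flatten_items(json.loads(raw))
for row in rows:
    print(row)
```

Each element of the nested array becomes its own row, with the parent fields repeated alongside it, which is the shape a relational query expects.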

4) Zero Infrastructure Management

No servers to provision and no indexes or manual partitions to maintain. Snowflake handles maintenance, routine optimization, and upgrades automatically.

5) Secure & Easy Data Sharing

Share live data securely across departments, partners, or clients without copying files, a capability that many platforms lack.

6) Multi-Cloud Availability

Runs on AWS, Azure, and Google Cloud. Skills are portable across cloud providers and projects.

7) Time Travel & Fail-Safe

Recover deleted/updated data with Time Travel and Fail-Safe features—very useful for real-time production environments.
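The idea of reading data as it existed at an earlier point can be illustrated with a toy append-only history in Python. This is only a conceptual sketch and is not how Snowflake implements Time Travel; the `VersionedValue` class, its methods, and the integer timestamps are all hypothetical.

```python
import bisect

class VersionedValue:
    """Toy append-only history: every write is kept so earlier states can be read back."""

    def __init__(self):
        self._timestamps = []  # write times, kept in ascending order
        self._values = []      # value written at the matching timestamp

    def write(self, ts, value):
        if self._timestamps and ts <= self._timestamps[-1]:
            raise ValueError("timestamps must be strictly increasing")
        self._timestamps.append(ts)
        self._values.append(value)

    def read_as_of(self, ts):
        """Return the value that was current at time ts, or None if nothing existed yet."""
        idx = bisect.bisect_right(self._timestamps, ts)
        return self._values[idx - 1] if idx else None

price = VersionedValue()
price.write(10, 499)
price.write(20, 549)
price.write(30, None)  # simulate an accidental delete at t=30

current = price.read_as_of(35)        # state after the delete
before_delete = price.read_as_of(25)  # state just before the delete
print(current, before_delete)
```

Because old versions are never overwritten, the value from before the accidental delete is still readable by asking for an earlier point in time.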

8) Built-in Data Lake + Data Warehouse

Acts as both a data lake and a warehouse, reducing the need for multiple tools.

9) Strong Demand in Hyderabad Job Market

Hyderabad’s IT companies are actively adopting Snowflake for analytics and data engineering, creating strong demand for trained professionals.

10) Easy Integration with BI & ETL Tools

Works smoothly with tools like Power BI, Tableau, and Azure Data Factory.

Result: Learning Snowflake in Hyderabad gives you practical, in-demand cloud data skills that translate directly into data engineer, Snowflake developer, and analytics roles.

Register for SNOWFLAKE Training in Hyderabad.

Frequently Asked Questions

Snowflake is a fully managed, cloud-native data platform used for data warehousing, data lakes, data engineering, and analytics.

It runs on top of major clouds and lets you store massive volumes of structured and semi-structured data, then query it with high performance—without managing servers or infrastructure.

Its unique design separates compute and storage, so teams can scale performance independently and pay only for what they use.

DABI focuses on real-time project scenarios, hands-on labs, and interview-oriented preparation using Snowflake in practical workflows.

Yes. The program starts from basics (SQL & data concepts) and moves to advanced Snowflake topics step by step.

Yes. You’ll practice loading semi-structured data, building warehouses, writing queries, and optimizing performance in lab sessions.

Yes. Trainers guide you with certification syllabus coverage, mock tests, and important questions.

Yes. DABI provides resume help, mock interviews, and local job referrals aligned with Hyderabad’s IT market.

You’ll learn how Snowflake connects with BI and ETL tools used in real companies for analytics workflows.

We’ve got you covered. We record the daily live classes and share the recordings every day. You can watch them at your own pace, practice, and ask the trainer any questions you have.

The core purpose of Snowflake is to provide a fully managed, cloud-native platform where organizations can store, process, analyze, and securely share data at massive scale—without managing infrastructure.

Scale compute (virtual warehouses) and storage independently for performance and cost control.

Run many concurrent queries without contention; auto-scale up/down based on workload.

Query JSON, Parquet, Avro, XML alongside relational tables using SQL.

No indexing, partitioning, or server management. Automatic tuning, updates, and optimization.

Share live datasets across teams or partners without copying data.

Recover historical data and protect against accidental deletes/updates.

Snowflake skills are valuable for anyone working with data, analytics, or cloud platforms.

Learn to build ELT pipelines, manage warehouses, and optimize performance at scale.

Query large datasets with SQL and connect BI tools for dashboards and reports.

Modernize pipelines by loading raw data and transforming it directly inside Snowflake.

Extend SQL expertise into a high-demand cloud data platform.

Understand how data platforms run on AWS/Azure/GCP and manage workloads efficiently.

Start a career in data engineering and analytics with an in-demand platform.


CERTIFICATION

Upon successful completion of the Snowflake course, you’ll be eligible for a course completion certificate from us, recognizing your achievement and acquired skills.